Video Tutorial - How to Create a Secure JSF JPA Application

Published on 17 Apr 2020

by Fabio Turizo

Topics:

Java EE,

Security,

JakartaEE

|

6 Comments

How Using the Payara Platform in the Cloud Impacts DevOps

Published on 14 Nov 2019

by Fabio Turizo

Topics:

DevOps,

Cloud-native

|

0 Comments

At Payara Services, we have long advocated DevOps practices, not only in the development of our own products (such as Payara Server and Payara Micro) but also in the expert advice we give our user base. Our blog features arguments for adopting DevOps practices, details of DevOps tools, and new developments that benefit those practices.

Fabio Turizo's First Time at Oracle Code One

Published on 02 Oct 2019

by Fabio Turizo

Topics:

Conferences

|

0 Comments

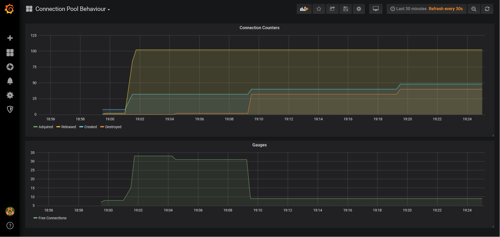

Payara Enterprise Support Success Story: JDBC Connection Pool Behaviour

Published on 13 Sep 2019

by Fabio Turizo

Topics:

Payara Support,

Payara Enterprise

|

1 Comment

As part of the Payara Enterprise Support services we deliver to customers on a daily basis, one of the most common scenarios we encounter is giving expert advice and clarifying how the internals of the Payara Platform products work. Here's a story about the advice we gave one of our customers regarding the behavior of JDBC Connection Pools in Payara Server.

My JConf Colombia 2019 Impressions

Published on 23 Jul 2019

by Fabio Turizo

Topics:

JConf,

Conferences

|

0 Comments

Fine Tuning Payara Server 5 in Production

Published on 21 May 2019

by Fabio Turizo

Topics:

Production Features,

JVM,

Payara Server 5

|

13 Comments

One of the biggest challenges when developing applications for the web is understanding how they need to be fine-tuned when they are released into a production environment. Java Enterprise applications deployed on a Payara Server installation are no exception.

Running a Payara Server setup is simple: download the current distribution suited to your needs (full or web), head to the /bin folder, and start the default domain (domain1). However, keep in mind that this default domain is tailored for development purposes (a trait inherited from GlassFish Server Open Source). When developing a web application, it’s better to code features quickly, deploy them, test them, undeploy (or redeploy) them, and continue with the next set of features until a stable state is reached.

(last updated 06/04/2021)

Microservices for Java EE Developers

Published on 18 Apr 2019

by Fabio Turizo

Topics:

Payara Micro,

Microservices

|

13 Comments

Nowadays, the concept of microservices is more than a simple novelty. With the advent of DevOps and the boom of container technologies and deployment automation tools, microservices are changing the way developers structure their applications. In this article, we aim to show that microservices are a valid option for Java/Jakarta EE developers and that Payara Micro is a robust platform for reaching that goal.

Understanding Payara Services OpenJDK Support Benefits

Published on 30 Nov 2018

by Fabio Turizo

Topics:

Payara Support,

OpenJDK

|

0 Comments

Starting this year, customers who come on board with our support services also get commercial OpenJDK support, thanks to the partnership between Payara Services and Azul Systems' Enterprise Support! If you are interested in Payara Enterprise or our Migration & Project Support but have doubts about the value this service brings to your organization and environment, this article may help dispel them and give you much-needed decision-making clarity.

Microservices for Java EE Developers (Japanese)

Published on 19 Jul 2018

by Fabio Turizo

Topics:

Java EE,

Payara Micro,

Microservices,

MicroProfile,

Japanese language

|

0 Comments

Fine Tuning Payara Server in Production (Japanese)

Published on 12 Jul 2018

by Fabio Turizo

Topics:

Production Features,

Docker,

How-to,

JVM,

Japanese language

|

0 Comments