Making Use of Payara Server's Monitoring Service - Part 2: Integrating with Logstash and Elasticsearch

Originally published on 24 Aug 2016

Last updated on 25 Aug 2016

by Fraser Savage

Following the first part of this series of blog posts, you should now have a Payara Server installation which monitors the HeapMemoryUsage MBean and logs the used, max, init and committed values to the server.log file. As mentioned in the introduction of the previous post, the Monitoring Service logs metrics in a way which allows for fairly hassle-free integration with tools such as Logstash and fluentd.

Often, you might find it useful to store your monitoring data in a search engine such as Elasticsearch or a time series database such as InfluxDB. One way of getting the monitoring data from your server.log into one of these datastores is to use Logstash.

This blog post covers how to get monitoring data from your server.log file and store it in Elasticsearch using Logstash.

Setting up Logstash

Logstash can be downloaded in a variety of forms from elastic.co. This post assumes that you extract the tar or zip archive for version 2.3.4, and that all work is done in the directory Logstash is extracted to.

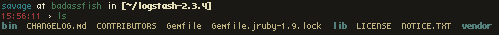

After extracting the archive you should have a directory containing the files shown below:

Next, the Logstash configuration file needs to be created. For simplicity's sake, the file can be called logstash.conf and placed in this directory. It will use the input, filter and output sections; you can read more about the structure of a Logstash config file in the Logstash documentation.

The 'input' section

To have Logstash take the server.log file as its input, the following can be used for the input section:

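    input {
      file {
        # Location of the Payara Server log (placeholder path)
        path => 'path/to/the/server.log'
        # Join each line starting with "[YYYY" to the line that follows it
        codec => multiline {
          pattern => "^\[\d{4}"
          what => "next"
        }
        # Read the file from the start rather than only new lines
        start_position => "beginning"
      }
    }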

This uses the file plugin to watch the server.log file and pass each event to the filter section, starting from the beginning of the file. Note that the file plugin expects path to be an absolute path.

By default, Logstash treats each line as an event, which is problematic for Java applications because many Java log entries take up multiple lines. To deal with this, the multiline codec can be used. With the default logging configuration, each Payara Server log entry begins with [YYYY, so the pattern ^\[\d{4} matches the first line of each log entry. Setting what => "next" tells the codec that a line matching the pattern belongs with the line that follows it, so the two are combined into a single event.
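To make the merging behaviour concrete, here is a minimal Python sketch of how lines are grouped with this pattern and what => "next". It is an illustration only, not Logstash's implementation; the function name merge_next and the shortened sample lines are made up for the example.

```python
import re

# Same pattern as in the codec: a line starting with "[" and four digits.
ENTRY_START = re.compile(r"^\[\d{4}")

def merge_next(lines):
    """Group lines the way what => "next" does: a line matching the
    pattern is held back and joined with the line(s) that follow it."""
    events, pending = [], []
    for line in lines:
        pending.append(line)
        # A non-matching line completes the event that is being built.
        if not ENTRY_START.search(line):
            events.append("\n".join(pending))
            pending = []
    if pending:  # a trailing matching line with nothing after it
        events.append("\n".join(pending))
    return events

# Shortened, hypothetical log lines for illustration.
sample = [
    "[2016-08-16T16:02:40.602+0100] [Payara 4.1] [INFO] [[",
    "JMX-MONITORING: usedHeapMemoryUsage=122405160 ]]",
    "[2016-08-16T16:02:45.602+0100] [Payara 4.1] [INFO] [[",
    "JMX-MONITORING: usedHeapMemoryUsage=123000000 ]]",
]
events = merge_next(sample)
print(len(events), "events")
```

Each resulting event still contains a newline between the two original lines, which is why the filter section below strips newlines before matching.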

The 'filter' section

With multiline input now being merged and passed in as single events, the filter section can use a few plugins to get the monitoring data out of the logs.

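    filter {
      if "JMX-MONITORING:" in [message] {
        # Flatten the multiline event into a single line
        mutate {
          gsub => ["message", "\n", ""]
        }
        # Pull the timestamp, server version, thread and metrics out of the entry
        grok {
          match => {
            "message" => "\[%{TIMESTAMP_ISO8601:timestamp}\] \[%{DATA:server_version}\] \[%{LOGLEVEL}\] \[\] \[%{JAVACLASS}\] \[%{DATA:thread}\] \[%{DATA}\] \[%{DATA}\] \[\[ *JMX-MONITORING: %{DATA:logmessage} \]\]"
          }
        }
        # Use the logged time as the event's @timestamp
        date {
          match => [
            "timestamp", "ISO8601"
          ]
          target => "@timestamp"
        }
        # Turn the key=value pairs into event fields
        kv {
          source => "logmessage"
        }
      } else {
        # Not monitoring data, so discard it
        drop { }
      }
    }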

First, this checks that the event message contains the string literal JMX-MONITORING:. If it doesn't, the event is dropped by Logstash using the drop plugin since we only want monitoring data to be stored.

If the event message does contain it then Logstash should be looking at an event that contains monitoring data. First, the mutate plugin is used to remove any newline characters from the message, then the grok plugin is used to match the message against the pattern given and extract data out of it.

The pattern given to grok should match anything of this structure:

    [2016-08-16T16:02:40.602+0100] [Payara 4.1] [INFO] [] [fish.payara.jmx.monitoring.MonitoringFormatter] [tid: _ThreadID=77 _ThreadName=payara-monitoring-service(1)] [timeMillis: 1471359760602] [levelValue: 800] [[
    JMX-MONITORING: committedHeapMemoryUsage=417333248 initHeapMemoryUsage=264241152 maxHeapMemoryUsage=477626368 usedHeapMemoryUsage=122405160 ]]

Grok extracts data from the parts of the message that match the named patterns given to it. Here the timestamp is stored in the timestamp field as 2016-08-16T16:02:40.602+0100, the server version in the server_version field as Payara 4.1, the thread data in the thread field as tid: _ThreadID=77 _ThreadName=payara-monitoring-service(1), and the log message (the series of key=value metrics) in the logmessage field.
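For readers less familiar with grok, the extraction can be approximated with an ordinary regular expression using named groups. The Python sketch below is a rough equivalent applied to the JMX-MONITORING sample entry above, not what Logstash runs internally; the character classes are looser stand-ins for grok's TIMESTAMP_ISO8601, LOGLEVEL and JAVACLASS patterns, and the names LOG_ENTRY, raw and fields are chosen for the example.

```python
import re

# Rough regular-expression equivalent of the grok pattern (an approximation).
LOG_ENTRY = re.compile(
    r"\[(?P<timestamp>[^\]]+)\] "
    r"\[(?P<server_version>.*?)\] "
    r"\[[A-Z]+\] "        # log level, not captured
    r"\[\] "
    r"\[[\w.$]+\] "       # logger class, not captured
    r"\[(?P<thread>.*?)\] "
    r"\[.*?\] "           # timeMillis, not captured
    r"\[.*?\] "           # levelValue, not captured
    r"\[\[ *JMX-MONITORING: (?P<logmessage>.*?) \]\]"
)

# The sample entry from this post, as a two-line multiline event.
raw = (
    "[2016-08-16T16:02:40.602+0100] [Payara 4.1] [INFO] [] "
    "[fish.payara.jmx.monitoring.MonitoringFormatter] "
    "[tid: _ThreadID=77 _ThreadName=payara-monitoring-service(1)] "
    "[timeMillis: 1471359760602] [levelValue: 800] [[\n"
    "JMX-MONITORING: committedHeapMemoryUsage=417333248 "
    "initHeapMemoryUsage=264241152 maxHeapMemoryUsage=477626368 "
    "usedHeapMemoryUsage=122405160 ]]"
)

message = raw.replace("\n", "")  # same job as the mutate/gsub step
fields = LOG_ENTRY.match(message).groupdict()
for name, value in fields.items():
    print(f"{name}: {value}")
```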

After grok has parsed the event message, the date plugin is used to parse the timestamp field and copy it into the event's @timestamp field, so that the time the entry was logged is used instead of the time Logstash processed the event.
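As a rough illustration of that conversion, the following Python sketch parses the sample timestamp and normalises it to UTC, which is how Logstash stores @timestamp. The variable names are chosen for the example, and the sketch is not how the date plugin is implemented.

```python
from datetime import datetime, timedelta, timezone

timestamp = "2016-08-16T16:02:40.602+0100"  # value extracted by grok

# Parse the ISO 8601 string, including its +0100 offset.
parsed = datetime.strptime(timestamp, "%Y-%m-%dT%H:%M:%S.%f%z")

# Normalise to UTC, dropping the local offset.
event_time = parsed.astimezone(timezone.utc)
print(event_time.isoformat())

# Cross-check against the timeMillis value logged in the same sample entry.
epoch = datetime(1970, 1, 1, tzinfo=timezone.utc)
millis = (event_time - epoch) // timedelta(milliseconds=1)
print(millis)
```

The millisecond value computed here is the same as the timeMillis field in the sample log entry, which confirms that the parsed time is the time the entry was logged.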

The final plugin used inside this branch of the conditional statement is kv, a filter plugin that is useful for parsing messages which have a series of key=value strings. By giving the logmessage field as the source it will map the key-value pairs to fields in the event.
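The effect of kv on the logmessage field can be sketched in a few lines of Python. This assumes the plugin's default behaviour of splitting pairs on spaces and keys from values on "="; parse_kv is a hypothetical helper written for this example, not part of Logstash.

```python
def parse_kv(logmessage):
    """Split 'key=value key=value ...' into a dict, roughly what kv does
    when pairs are separated by spaces and keys from values by '='."""
    fields = {}
    for pair in logmessage.split():
        key, sep, value = pair.partition("=")
        if sep:  # skip tokens that contain no '='
            fields[key] = value
    return fields

logmessage = (
    "committedHeapMemoryUsage=417333248 initHeapMemoryUsage=264241152 "
    "maxHeapMemoryUsage=477626368 usedHeapMemoryUsage=122405160"
)
metrics = parse_kv(logmessage)
for key, value in metrics.items():
    print(key, value)
```

In this sketch the values stay as strings. If you want to treat them as numbers later on, a conversion step is worth considering.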

The 'output' section

This section of the config handles how and where Logstash outputs the event it's processing. An output section might look like the following:

    output {
      # This stdout plugin is optional, don't use it if you don't want output to the shell logstash is running in!
      stdout {
        codec => "rubydebug"
      }
      elasticsearch {
        hosts => "localhost:9200"
      }
    }

The first plugin in this section is stdout, used here with the rubydebug codec, which prints the event data to the shell using the awesome_print library. A json codec is also available. This plugin isn't necessary, but it is useful for seeing the form that events have when they are stored in Elasticsearch.

The second is the elasticsearch plugin, which uses HTTP to store logs in Elasticsearch. It's designed to make it easy to use the data in the Kibana dashboard and is recommended by Elastic. The hosts setting has been given the value localhost:9200 so that output is sent to the address Elasticsearch listens on out of the box.

Setting up Elasticsearch and running Logstash

Like Logstash, Elasticsearch can be downloaded in a number of forms from elastic.co. Again, this post assumes that either the tar or the zip archive for version 2.3.5 (or similar) is downloaded and that work is done in the directory it is extracted to.

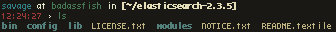

Extracting the archive should yield the following:

To get Elasticsearch up and running all that needs done is to run:

> ./bin/elasticsearch

Provided that runs successfully, you now have Elasticsearch set up for storing monitoring data! Once Elasticsearch is ready, Logstash can be run to start filtering the events and storing them as structured data in Elasticsearch. In a new shell, change to the directory Logstash was extracted to and start Logstash with the config file:

> ./bin/logstash -f logstash.conf

Logstash should then start the pipeline, and events should be output to the shell if the stdout plugin was used. To check that the config is valid beforehand, Logstash can be passed the -t option, although it then needs to be run again without the option for the pipeline to start.
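Once events are flowing, one way to confirm that they reached Elasticsearch is to ask it for the most recent document. The Python sketch below uses only the standard library. It assumes Elasticsearch is listening on localhost:9200, as in the output section, and that the elasticsearch plugin writes to its default daily logstash-YYYY.MM.dd indices; the function names are made up for the example.

```python
import json
import urllib.request

ES_URL = "http://localhost:9200"  # same host and port as the output section
INDEX_PATTERN = "logstash-*"      # assumption: default daily logstash-* indices

def build_search_request(size=1):
    """Build a search request for the most recent events, newest first."""
    body = {
        "size": size,
        "sort": [{"@timestamp": {"order": "desc"}}],
        "query": {"match_all": {}},
    }
    return urllib.request.Request(
        url=f"{ES_URL}/{INDEX_PATTERN}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def fetch_latest(size=1):
    """Send the search and return the stored events (needs Elasticsearch running)."""
    with urllib.request.urlopen(build_search_request(size)) as response:
        hits = json.load(response)["hits"]["hits"]
    return [hit["_source"] for hit in hits]
```

Calling fetch_latest() while both services are running should return a list whose entries contain the fields created by the filter section, such as usedHeapMemoryUsage and server_version.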
